Digital Sovereignty is the collective ability of states and communities to shape, govern, and safeguard the digital infrastructures, data, and standards that underpin their societies, and it is rapidly becoming a global imperative. As real-world incidents such as the October 2025 outage of AWS’s US-East-1 data centre cluster have shown, external control over fundamental digital infrastructure, data hosting, and service providers can create profound vulnerabilities.

For countries seeking to strengthen their digital autonomy and reduce strategic dependencies, a focus on building critical capacities and embracing public-interest, open source solutions is essential. Digital Public Goods (DPGs) - open source software, open data, open AI systems and open content collections - are a vital piece of the puzzle in this quest, providing foundational, open, and accessible components that nations, communities and people can truly own, adapt, and control.

Artificial Intelligence, together with the underlying technology stack on which user-facing applications such as chatbots and agents are built, is one of the key focus areas for enhancing strategic autonomy and resilience, both core components of digital sovereignty.

The case for openness in AI

As AI becomes increasingly ubiquitous in our daily lives, poised to power everything from healthcare diagnostics to public service delivery, the way models are trained and systems are built and deployed is becoming an essential question of trust, safety, accountability, and sovereignty.

Take the public sector: governments around the globe are compelled to integrate AI into their processes to make public services more efficient, effective, and responsive to citizen needs by automating processes, optimising resource allocation, supporting decision-making, and personalising services and citizen participation. According to an OECD report, 67% of OECD countries utilise AI to improve public service delivery.

To ensure accountability, transparency, and fairness, while enabling long-term economic benefits and control over one’s infrastructure, AI systems must be open, trustworthy, and free from proprietary lock-in or external jurisdiction.

That’s where AI digital public goods come in. According to the UN’s definition of DPGs, these products must be open source, do no harm by design and help attain the Sustainable Development Goals (SDGs). Achieving this designation is not merely about using open source licenses; it is a commitment to radical transparency and safety that directly enables control and trust. Hence, AI DPGs are a crucial component of sovereign AI. Moreover, open source and open science practices, including the sharing of papers, code, and model components, have driven significant progress in AI development over the past few years and form the basis for economic growth and competitiveness.

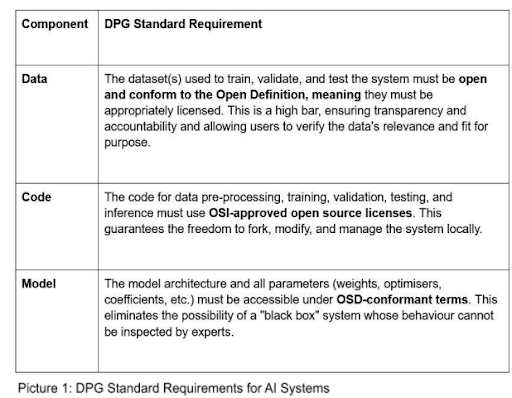

To be recognised as a DPG by the Digital Public Goods Alliance, an AI system must adhere to the DPG Standard, which dictates strict technical requirements to ensure that implementers, such as governments, companies and civil society, can thoroughly inspect, adapt, and reuse the technology without hidden dependencies.

The DPG Standard for AI systems: an aspiration for openness

It is essential to acknowledge that the DPG Standard for AI systems extends beyond the open source AI definition provided by the Open Source Initiative (OSI) and is aspirational in nature. The requirement for openness across all components, especially the underlying training data, sets a very high bar. We recognise that few AI solutions currently meet this comprehensive standard.

However, this high bar is intentional. It represents the gold standard for public interest and trustworthiness. Our goal is not to exclude, but to encourage developers to build more openly. By adhering to the DPG Standard, even if a solution only meets some indicators today, developers contribute to a future where AI systems are truly shared digital assets, enabling greater digital sovereignty and realising public interest objectives worldwide. In addition, the high bar is also intended to strengthen the open data movement, making a case for the critical importance of open data in building trustworthy AI and encouraging the creation of open tools and datasets that power public interest AI.

The technical pillars of AI DPGs

To be recognised as an AI DPG, the DPG Standard requires openness across all core components of an AI system. The following must be provided:

Documentation and responsible AI: building trust and control in AI DPGs

Building trustworthy and auditable AI DPGs requires two non-negotiable prerequisites that are embedded in the DPG Standard: (1) transparent documentation and (2) do no harm by design through responsible AI practices. Transparent documentation enables reuse by requiring clear formats such as model cards and data sheets that cover the model overview, intended use, and known limitations (including biases and weaknesses), and provide detailed data provenance (source and quality).
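As a rough illustration of what such documentation might look like in machine-readable form, the sketch below models a model card as a small Python data structure and flags sections that have been left empty. The class name `ModelCard`, its field names, and the helper `missing_sections` are hypothetical, chosen only to mirror the topics listed above; they are not an official DPG Standard schema.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class ModelCard:
    """Hypothetical model card; fields mirror the topics named in the text,
    not an official DPG Standard schema."""
    model_overview: str = ""
    intended_use: str = ""
    known_limitations: List[str] = field(default_factory=list)  # biases, weaknesses
    data_sources: List[str] = field(default_factory=list)       # data provenance: source
    data_quality_notes: str = ""                                 # data provenance: quality


def missing_sections(card: ModelCard) -> List[str]:
    """Return the names of sections that are still empty."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


if __name__ == "__main__":
    card = ModelCard(
        model_overview="Crop-disease image classifier",
        intended_use="Advisory support for agricultural extension workers",
    )
    # Lists the sections an implementer would still need to document.
    print(missing_sections(card))
```

A simple completeness check like this could run before publication, so that gaps in limitations or data provenance are visible to reviewers rather than discovered after deployment.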

Mandatory responsible AI practices ensure ethical compliance, aligning with frameworks such as UNESCO's Recommendation on the Ethics of Artificial Intelligence. This pillar focuses on risk mitigation and harm prevention by requiring disclosure on proportionality and impact on people, steps to address bias and fairness, validation tests and guardrails, and transparency regarding the model's logic and decision-making processes.

Sovereign AI beyond DPGs - the AI stack

Achieving genuine sovereign AI goes beyond models and systems. It also requires confronting the deep concentrations of power at the base of the "AI stack," namely the centralised cloud infrastructure and the critical hardware oligopoly. The dominance of a few hyperscalers (Amazon, Microsoft, Google) in cloud computing, coupled with the overwhelming market share of companies like Nvidia in specialised chips (GPUs), creates a precarious dependence for any nation's digital future.

Incidents like major cloud outages reveal a democratic deficit in relying on Big Tech for core digital functions that power our collective ability to interact, share knowledge, and more. Furthermore, this consolidation of influence ensures that AI systems reflect the economic incentives of their creators, often eroding public oversight and democratic accountability. True sovereign AI at the infrastructural level is only possible by decoupling public interest technology from this proprietary infrastructure and building demand-driven public alternatives.

In response, the European Commission (EC) has launched policy initiatives such as the AI Continent Action Plan and the InvestAI Facility, which foresees investments in up to five AI Gigafactories (large-scale compute facilities focused on the development of highly capable AI models) as a step toward securing compute capacity and developing competitive European models. However, many questions remain, such as how access will be managed and what conditions will be attached to using public compute.

The broader push for sovereign AI from the European Commission’s side, with the ApplyAI Strategy, focuses on applications and seeks to mobilise resources comparable to those of major commercial AI labs. However, critics note that this approach to European sovereign AI risks mirroring the priorities of dominant commercial actors and focuses heavily on large-scale investment without a clear, explicitly defined public interest focus, potentially deepening dependencies rather than solving the problem of concentrated power. Therefore, policy initiatives at the regional and national levels must prioritise public interest use cases and embed openness requirements, as reflected in the DPG Standard, into these investments to ensure they serve the common good and contribute to building lasting digital autonomy and resilience.

Taking a holistic view, any country aiming to build sovereign AI should also ensure that the enabling conditions are met: regulatory frameworks that protect the fundamental rights of citizens and mitigate AI risks, solid institutions that enforce such regulations and secure critical infrastructure, and an AI-literate population.

About the Author

Lea Gimpel is the Director of Policy and AI Lead at the Digital Public Goods Alliance (DPGA) with almost 15 years of experience at the intersection of technology policy and international development. Previously, she co-led the AI flagship initiative of the German development cooperation "FAIR Forward," a global effort to democratise AI development through open datasets and models.

1. https://www.digitalpublicgoods.net/digital-public-goods (accessed October 31, 2025)

2. OECD (2025), Governing with Artificial Intelligence: The State of Play and Way Forward in Core Government Functions, OECD Publishing, Paris, https://doi.org/10.1787/795de142-en

3. Linux Foundation, The State of Sovereign AI, https://www.linuxfoundation.org/hubfs/Research%20Reports/lfr_sovereign_ai_090525a.pdf?hsLang=en (accessed October 31, 2025).

4. http://www.digitalpublicgoods.net/standard (accessed October 21, 2025)

5. https://git.new/dpg-wiki (accessed October 31, 2025).

6. Zuzanna Warso (2025), What does Europe Need and How to Achieve it, Tech Policy Press, https://www.techpolicy.press/building-digital-sovereignty-what-does-europe-need-and-how-to-achieveit/ (accessed October 21, 2025)